Domain and Task-Focused Example Selection for Data-Efficient Contrastive Medical Image Segmentation

Tyler B. Ward1, Aaron Moseley1, Abdullah-Al-Zubaer Imran1

1: Department of Computer Science, University of Kentucky

Publication date: 2026/03/13

https://doi.org/10.59275/j.melba.2026-3cg7

Abstract

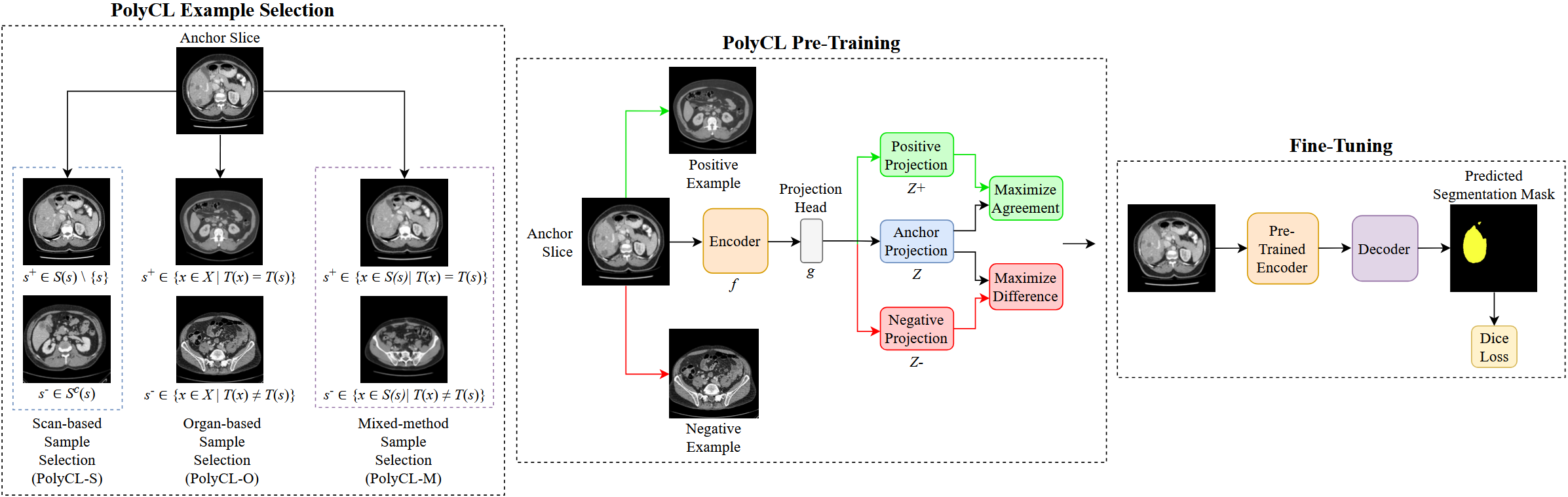

Segmentation is one of the most important tasks in the medical imaging pipeline, as it influences a number of image-based decisions. To be effective, fully supervised segmentation approaches require large amounts of manually annotated training data. However, pixel-level annotation is expensive, time-consuming, and error-prone, which hinders progress and makes effective segmentation challenging. Models must therefore learn efficiently from limited labeled data. Self-supervised learning (SSL), particularly contrastive learning via pre-training on unlabeled data and fine-tuning on limited annotations, can facilitate segmentation when labeled images are scarce. To this end, we propose PolyCL, a novel self-supervised contrastive learning framework for medical image segmentation that leverages the inherent relationships among different images. Without requiring any pixel-level annotations or unreasonable data augmentations, PolyCL learns and transfers context-aware discriminant features useful for segmentation from an innovative surrogate, in a task-related manner. Additionally, we integrate the Segment Anything Model (SAM) into our framework in two novel ways: as a post-processing refinement module that improves the accuracy of predicted masks using bounding box prompts derived from coarse outputs, and as a propagation mechanism via SAM 2 that generates volumetric segmentations from a single annotated 2D slice. Experimental evaluations on three public computed tomography (CT) datasets demonstrate that PolyCL outperforms fully supervised and self-supervised baselines in both low-data and cross-domain scenarios. Our code is available at https://github.com/tbwa233/PolyCL
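
As an illustration of the refinement step mentioned above, the following is a minimal sketch of how a bounding box prompt might be derived from a coarse predicted mask. It is not taken from the PolyCL code: the function name `mask_to_box`, the `pad` margin, and the `[x_min, y_min, x_max, y_max]` ordering are assumptions made for this example, and the step of passing the box to SAM is omitted.

```python
import numpy as np


def mask_to_box(mask, pad=0):
    """Tight bounding box of the foreground in a 2D binary mask.

    Hypothetical helper for illustration only. Returns an array
    [x_min, y_min, x_max, y_max] in pixel coordinates, or None when
    the mask has no foreground. `pad` widens the box by a fixed margin
    (an assumed option), clipped to the image borders.
    """
    mask = np.asarray(mask).astype(bool)
    if mask.ndim != 2:
        raise ValueError("expected a 2D mask")

    # Row (y) and column (x) indices of all foreground pixels.
    ys, xs = np.nonzero(mask)
    if ys.size == 0:
        return None  # nothing was segmented, so there is no box to prompt with

    height, width = mask.shape
    x_min = max(int(xs.min()) - pad, 0)
    y_min = max(int(ys.min()) - pad, 0)
    x_max = min(int(xs.max()) + pad, width - 1)
    y_max = min(int(ys.max()) + pad, height - 1)
    return np.array([x_min, y_min, x_max, y_max])


# Toy coarse mask: foreground in rows 2..4 and columns 3..6 of an 8x8 image.
coarse = np.zeros((8, 8), dtype=np.uint8)
coarse[2:5, 3:7] = 1
print(mask_to_box(coarse))         # [3 2 6 4]
print(mask_to_box(coarse, pad=2))  # [1 0 7 6]
```

A small margin such as `pad` is one plausible way to keep the prompt from clipping structures that the coarse prediction under-segments. Whether the authors pad their boxes is not stated in the abstract.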

Keywords

Computed tomography · contrastive learning · medical image segmentation · self-supervised learning · SAM

Bibtex

@article{melba:2026:002:ward,

title = "Domain and Task-Focused Example Selection for Data-Efficient Contrastive Medical Image Segmentation",

author = "Ward, Tyler B. and Moseley, Aaron and Imran, Abdullah-Al-Zubaer",

journal = "Machine Learning for Biomedical Imaging",

volume = "2026",

issue = "March 2026 issue",

year = "2026",

pages = "21--36",

issn = "2766-905X",

doi = "https://doi.org/10.59275/j.melba.2026-3cg7",

url = "https://melba-journal.org/2026:002"

}

RIS

TY - JOUR

AU - Ward, Tyler B.

AU - Moseley, Aaron

AU - Imran, Abdullah-Al-Zubaer

PY - 2026

TI - Domain and Task-Focused Example Selection for Data-Efficient Contrastive Medical Image Segmentation

T2 - Machine Learning for Biomedical Imaging

VL - 2026

IS - March 2026 issue

SP - 21

EP - 36

SN - 2766-905X

DO - https://doi.org/10.59275/j.melba.2026-3cg7

UR - https://melba-journal.org/2026:002

ER -