Don’t Mind the Gaps: Implicit Neural Representations for Resolution-Agnostic Retinal OCT Analysis

Bennet Kahrs1,2, Julia Andresen2, Fenja Falta2, Monty Santarossa3, Heinz Handels1,2, Timo Kepp1

1: German Research Center for Artificial Intelligence, Luebeck, DE, 2: Institute of Medical Informatics, University of Luebeck, Luebeck, DE, 3: Multimedia Information Processing Group, Kiel University, Kiel, DE

Publication date: 2026/03/11

https://doi.org/10.59275/j.melba.2026-38ba

Abstract

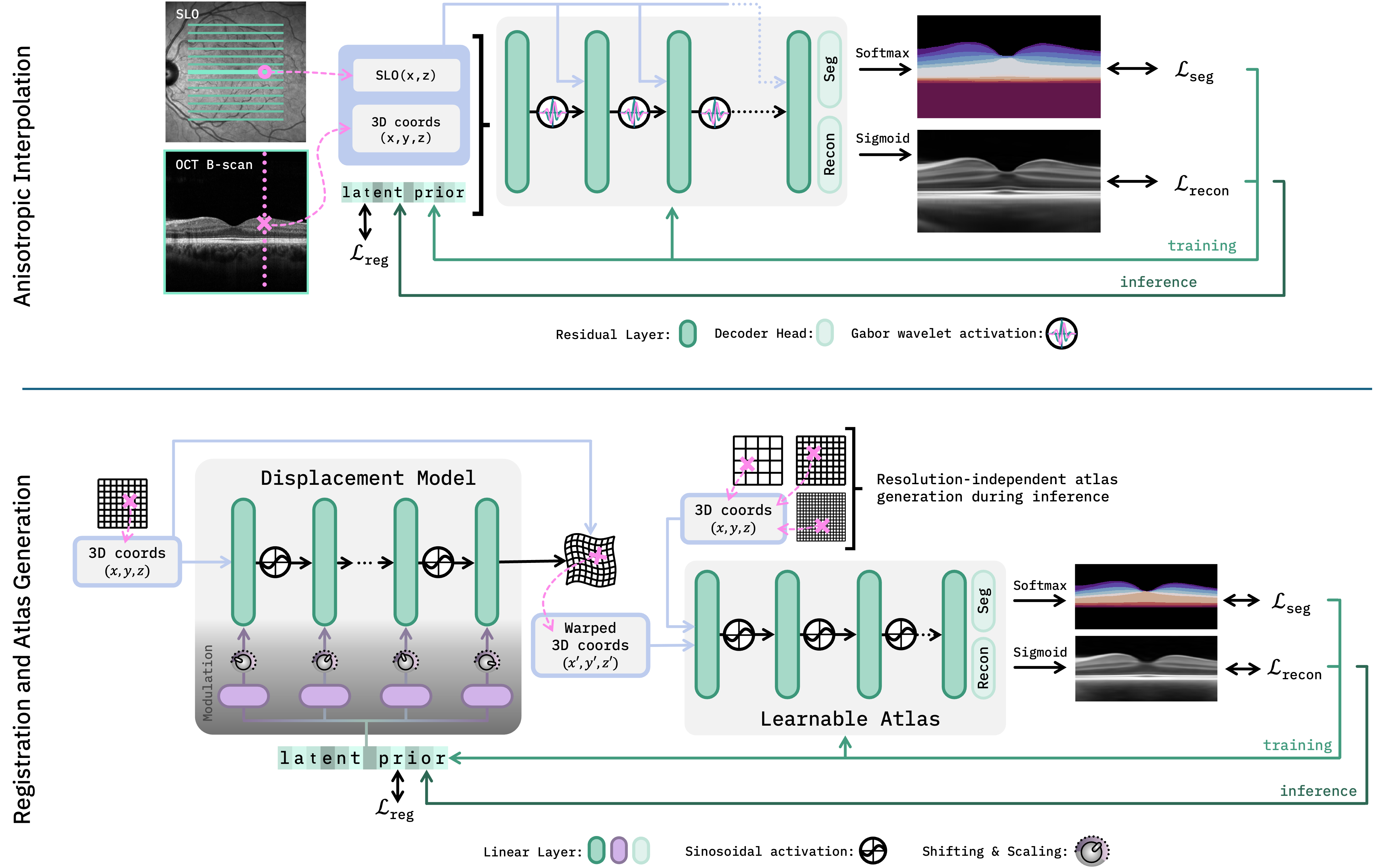

Routine clinical imaging of the retina using optical coherence tomography (OCT) is performed with large slice spacing, resulting in highly anisotropic images and a sparsely scanned retina. Most learning-based methods circumvent the problems arising from the anisotropy by using 2D approaches rather than performing volumetric analyses. These approaches inherently bear the risk of generating inconsistent results for neighboring B-scans. For example, 2D retinal layer segmentations can have irregular surfaces in 3D. Furthermore, the typically used convolutional neural networks are bound to the resolution of the training data, which prevents their use for images acquired with a different imaging protocol. Implicit neural representations (INRs) have recently emerged as a tool to store voxelized data as a continuous representation. Because they take coordinates as input, INRs are resolution-agnostic, which allows them to be applied to anisotropic data. In this paper, we propose two frameworks that make use of this characteristic of INRs for dense 3D analyses of retinal OCT volumes. 1) We perform inter-B-scan interpolation by incorporating additional information from en-face modalities, which help retain relevant structures between B-scans. 2) We create a resolution-agnostic retinal atlas that enables general analysis without strict requirements for the data. Both methods leverage generalizable INRs, improving retinal shape representation through population-based training and allowing predictions for unseen cases. Our resolution-independent frameworks facilitate the analysis of OCT images with large B-scan distances, opening up possibilities for the volumetric evaluation of retinal structures and pathologies. Our code is available at https://github.com/tkepp/ResA-OCT
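The resolution-agnostic property mentioned in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation (see their repository for that): the network below is a hypothetical, untrained two-layer coordinate MLP with random weights, the sinusoidal activation is an assumption, and the names `inr` and `make_grid` are invented for illustration. It only shows that a function of continuous coordinates can be sampled at any B-scan spacing without changing the model.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical, untrained coordinate MLP: (x, y, z) in [-1, 1]^3 -> intensity.
# The weights are random; a real INR would be fitted to OCT data.
W1 = rng.normal(size=(3, 64))
b1 = rng.normal(size=64)
W2 = rng.normal(size=(64, 1)) / 8.0
b2 = np.zeros(1)


def inr(coords):
    """Evaluate the toy INR at an (N, 3) array of continuous coordinates."""
    hidden = np.sin(coords @ W1 + b1)  # sinusoidal activation (an assumption)
    return (hidden @ W2 + b2)[:, 0]


def make_grid(n_bscans, n_ascans=8, n_depth=8):
    """Normalized coordinate grid; n_bscans controls the slice spacing."""
    axes = [np.linspace(-1.0, 1.0, n) for n in (n_bscans, n_ascans, n_depth)]
    return np.stack(np.meshgrid(*axes, indexing="ij"), axis=-1)


sparse = make_grid(n_bscans=5)  # large B-scan spacing
dense = make_grid(n_bscans=9)   # half the spacing, same field of view

# The same function is queried on both grids; only the sampling changes.
sparse_vol = inr(sparse.reshape(-1, 3)).reshape(sparse.shape[:-1])
dense_vol = inr(dense.reshape(-1, 3)).reshape(dense.shape[:-1])

print(sparse_vol.shape, dense_vol.shape)
```

Every second slice of the dense volume lies at the same coordinates as a slice of the sparse volume, so the two agree there, while the slices in between are predictions of the continuous function at positions that the sparse grid never sampled.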

Keywords

Optical Coherence Tomography · Implicit Neural Representation · B-scan Interpolation · Retinal Atlas · Multi-modal Analysis · Image Registration

Bibtex

@article{melba:2026:004:kahrs,

title = "Don’t Mind the Gaps: Implicit Neural Representations for Resolution-Agnostic Retinal OCT Analysis",

author = "Kahrs, Bennet and Andresen, Julia and Falta, Fenja and Santarossa, Monty and Handels, Heinz and Kepp, Timo",

journal = "Machine Learning for Biomedical Imaging",

volume = "2026",

issue = "MELBA–BVM 2025 Special Issue",

year = "2026",

pages = "59--77",

issn = "2766-905X",

doi = "https://doi.org/10.59275/j.melba.2026-38ba",

url = "https://melba-journal.org/2026:004"

}

RIS

TY - JOUR

AU - Kahrs, Bennet

AU - Andresen, Julia

AU - Falta, Fenja

AU - Santarossa, Monty

AU - Handels, Heinz

AU - Kepp, Timo

PY - 2026

TI - Don’t Mind the Gaps: Implicit Neural Representations for Resolution-Agnostic Retinal OCT Analysis

T2 - Machine Learning for Biomedical Imaging

VL - 2026

IS - MELBA–BVM 2025 Special Issue

SP - 59

EP - 77

SN - 2766-905X

DO - https://doi.org/10.59275/j.melba.2026-38ba

UR - https://melba-journal.org/2026:004

ER -